Most AI products are designed to feel certain. Clean outputs. Confident phrasing. Seamless delivery. The design goal, often implicit, is to hide the seams — to make the model feel less like a probabilistic system and more like a knowledgeable colleague who always has an answer.

This is a design choice. And in many cases, it’s the wrong one.

Why products default to confidence

The overconfidence of AI products isn’t accidental. It reflects a set of compounding pressures: marketing wants AI to feel impressive; engineering teams measure output quality in ways that reward fluency; design teams are brought in late and inherit interfaces built around the model’s strengths, not its failure modes.

The result is products where uncertainty is hidden, understated, or absent entirely. The model’s output lands with the same visual weight and tone whether it’s highly confident or essentially guessing. From a user’s perspective, there’s no signal. Every response looks the same.

What overtrust costs users

In human-automation research, the tendency to follow or over-rely on automated systems, even when they’re wrong, is called automation bias; Parasuraman and Riley (1997) describe the same pattern as misuse of automation. It was first studied in aviation and process control, but it applies anywhere humans delegate judgement to automated systems.

Dzindolet and colleagues (2003) showed that trust in automation tends to be poorly calibrated in both directions: people over-rely when systems are unreliable, and under-rely when systems are more capable than they appear. Overtrust is usually the more dangerous of the two, because people act on bad information without realising they’re doing it.

For AI products, the consequences are concrete. A user who trusts a summarisation tool without knowing it’s prone to confident hallucination acts on false information. A clinician who relies on a diagnostic assistant without knowing it performs poorly on atypical cases misses things they might otherwise have caught. A customer service agent who follows an AI-suggested response without knowing the model was uncertain gives wrong answers with confidence borrowed from the system.

In each of those failures, the interface was at fault as well as the model.

The design challenge

Signalling uncertainty in AI outputs is harder than it sounds, for two reasons.

First, users don’t have a reliable intuitive model of what AI uncertainty means. A confidence percentage can imply precision the system doesn’t have, and it often isn’t meaningful to non-technical users. Showing nothing hides information that matters. The middle ground is uncertainty communication that’s calibrated, comprehensible, and doesn’t make the product feel broken, and getting there requires deliberate design work.

Second, there’s a real product tension to navigate. Interfaces that hedge constantly feel useless. Designing for humility doesn’t mean every response carries a disclaimer. It means designing appropriate signals for when uncertainty is meaningful — which requires knowing when that is.

Amershi and colleagues’ Guidelines for Human-AI Interaction (2019) include the principle of making clear why a system did what it did. But calibrated uncertainty communication is a prerequisite for that. Users can’t evaluate AI behaviour if they don’t know when to be sceptical.

Patterns for humble AI

Confidence gradients over binary states. Rather than “here’s the answer” versus “I don’t know,” design for a spectrum. Distinguish between high-confidence outputs that can be acted on, lower-confidence outputs worth reviewing, and situations where uncertainty should be surfaced explicitly. The visual treatment, copy, and interaction model can all shift accordingly.

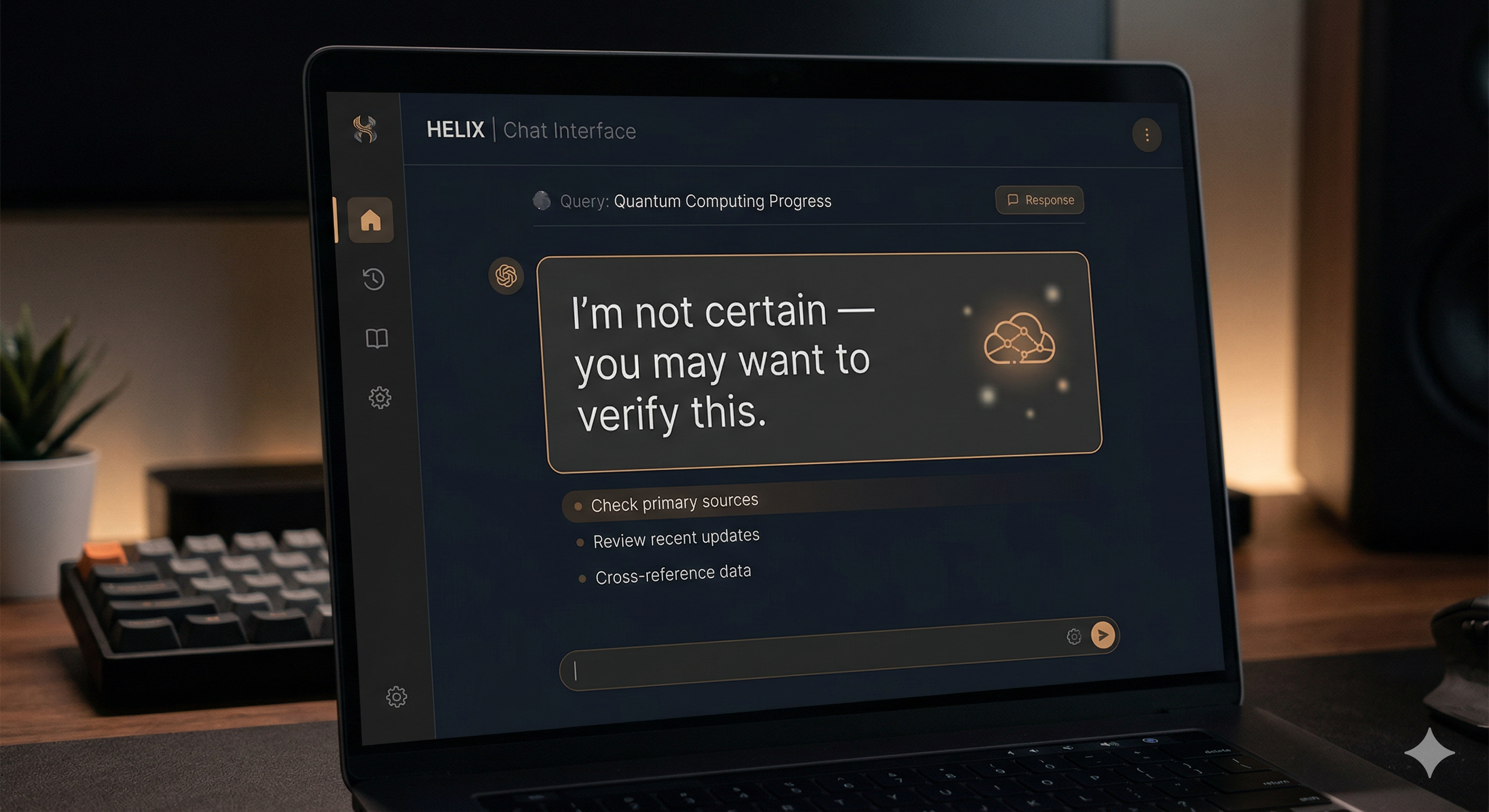
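One way to make the confidence gradient concrete is to map a score to a small set of presentation tiers, so the user sees a label and a visual treatment rather than a raw percentage. The sketch below is illustrative only: it assumes the product has access to a calibrated confidence score between 0 and 1, and the thresholds, labels, and style names are hypothetical placeholders rather than recommended values.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    ACT = "act"          # high confidence: present as a normal answer
    REVIEW = "review"    # lower confidence: invite the user to check
    SURFACE = "surface"  # uncertainty becomes the main message


@dataclass(frozen=True)
class Treatment:
    tier: Tier
    label: str  # short copy shown alongside the output
    style: str  # hypothetical class name for the visual treatment


# Hypothetical cut-offs; real values should come from calibration data.
REVIEW_THRESHOLD = 0.5
ACT_THRESHOLD = 0.85


def treatment_for(confidence: float) -> Treatment:
    """Map a confidence score in [0, 1] to a presentation tier."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= ACT_THRESHOLD:
        return Treatment(Tier.ACT, "", "answer")
    if confidence >= REVIEW_THRESHOLD:
        return Treatment(Tier.REVIEW, "Worth double-checking", "answer answer--review")
    return Treatment(Tier.SURFACE, "I'm not sure about this", "answer answer--uncertain")
```

Keeping the mapping in one place means copy, visual weight, and interaction can change together when the thresholds are tuned.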

“I’m not sure” as a first-class state. Most AI interfaces are designed around the success case. The uncertain or low-confidence case is an afterthought, often inheriting the same visual treatment as a confident response. Designing explicitly for uncertain states — what the component looks like, what copy it carries, what action it invites — is the difference between hiding uncertainty and handling it gracefully.
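In code, treating the uncertain case as a first-class state can be as simple as giving it its own type, so the renderer cannot fall through to the confident layout by accident. This is a minimal sketch under assumed names: `ConfidentAnswer`, `UncertainAnswer`, the component names, and the default action are all hypothetical.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class ConfidentAnswer:
    text: str


@dataclass(frozen=True)
class UncertainAnswer:
    text: str                         # the model's best attempt
    reason: str                       # why it is unsure, in plain language
    next_steps: tuple[str, ...] = ()  # actions the interface invites


Answer = Union[ConfidentAnswer, UncertainAnswer]


def render(answer: Answer) -> dict:
    """Return a view description; each state gets its own component."""
    if isinstance(answer, UncertainAnswer):
        return {
            "component": "uncertain-card",
            "heading": "I'm not sure about this",
            "body": answer.text,
            "note": answer.reason,
            # Always offer at least one action in the uncertain state.
            "actions": list(answer.next_steps) or ["Verify with a source"],
        }
    return {"component": "answer-card", "body": answer.text, "actions": []}
```

The uncertain state carries its own fields (a reason and next steps), which prompts the team to decide what copy and actions it needs instead of reusing the success layout.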

Ask rather than assume. When a model lacks context that would meaningfully improve its output, the right response is often to ask for it. This is both a UX pattern and a guardrail: a system that asks when uncertain is less likely to confidently produce something wrong. Deliberate friction on the user’s side can help too. Buçinca and colleagues (2021) showed that cognitive forcing functions, interventions that make users engage with a decision before they act on an AI output, significantly reduced overreliance compared with simple explanations, although participants rated those designs less favourably.
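The asking behaviour can be sketched as a check that runs before generation: if a piece of required context is missing, the system returns a question instead of an answer. The required fields, the question copy, and the `generate` callable below are assumptions for illustration; `generate` stands in for whatever call actually produces the model output.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Turn:
    kind: str  # "question" or "answer"
    text: str


# Hypothetical: context this task needs, with the question that asks for it.
REQUIRED_CONTEXT = {
    "audience": "Who is this summary for?",
    "length": "Roughly how long should it be?",
}


def next_turn(context: dict[str, str], generate: Callable[[dict[str, str]], str]) -> Turn:
    """Ask for the first missing piece of context; otherwise generate."""
    for key, question in REQUIRED_CONTEXT.items():
        if not context.get(key, "").strip():
            return Turn("question", question)
    return Turn("answer", generate(context))
```

Asking one question at a time keeps the interruption small; whether that is the right trade-off depends on how many fields a task really needs.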

Graceful degradation. Design the experience of the model at the edge of its knowledge. What does it say? What does it offer instead? A model that responds with “I’m not confident here — you might want to verify this” is more trustworthy than one that answers everything with equal fluency. The goal is not to appear less capable — it’s to appear reliably honest about capability.
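A rough sketch of graceful degradation: below some confidence floor, the reply keeps the draft but adds a caveat and offers somewhere else to look. The floor value, the `Reply` shape, and the fallback copy are hypothetical, and the sketch assumes a confidence score is available at all.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reply:
    text: str
    caveat: str = ""
    alternatives: tuple[str, ...] = ()


CONFIDENCE_FLOOR = 0.4  # hypothetical; below this, never show a bare answer


def degrade(draft: str, confidence: float, sources: tuple[str, ...] = ()) -> Reply:
    """Return the draft as-is, or with a caveat and alternatives when unsure."""
    if confidence >= CONFIDENCE_FLOOR:
        return Reply(draft)
    # Point to known sources if there are any; otherwise give a generic fallback.
    alternatives = tuple(f"Check {s}" for s in sources) or (
        "Check a primary source before relying on this",
    )
    return Reply(
        draft,
        "I'm not confident here. You might want to verify this.",
        alternatives,
    )
```

The important design decision is that the low-confidence branch always offers something to do next, so admitting uncertainty does not leave the user at a dead end.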

Humble AI is a safety mechanism

The framing of AI uncertainty as a UX problem is useful, but it undersells what’s at stake. Miscalibrated trust in AI systems has downstream consequences that go well beyond friction or dissatisfaction. It contributes to decisions made on false information, errors compounded by automation, and — at scale — a general erosion of the trust signal that makes AI worth using at all.

Shneiderman (2020) argues for human-centred AI as reliable, safe, and trustworthy, three properties that are inseparable in practice. You cannot have trustworthy AI without reliability, and users cannot judge reliability accurately without honest communication about uncertainty. The model alone can’t deliver that. The interface has to do that work.

Designing for humility isn’t about making AI feel less capable. It’s about making AI feel honest — which requires that users understand when to depend on it and when to look again.

That’s not a model problem. It’s a design problem.

References

- Amershi, S., Weld, D., Vorvoreanu, M., Fourney, A., Nushi, B., Collisson, P., Suh, J., Iqbal, S., Bennett, P. N., Inkpen, K., Teevan, J., Kikin-Gil, R., & Horvitz, E. (2019). Guidelines for human-AI interaction. Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems, 1–13.

- Buçinca, Z., Malaya, M. B., & Gajos, K. Z. (2021). To trust or to think: Cognitive forcing functions can reduce overreliance on AI in AI-assisted decision making. Proceedings of the ACM on Human-Computer Interaction, 5(CSCW1), 1–21.

- Dzindolet, M. T., Peterson, S. A., Pomranky, R. A., Pierce, L. G., & Beck, H. P. (2003). The role of trust in automation reliance. International Journal of Human-Computer Studies, 58(6), 697–718.

- Parasuraman, R., & Riley, V. (1997). Humans and automation: Use, misuse, disuse, abuse. Human Factors, 39(2), 230–253.

- Shneiderman, B. (2020). Human-centered artificial intelligence: Reliable, safe & trustworthy. International Journal of Human–Computer Interaction, 36(6), 495–504.